Reliable IPTV Australia: What 18 Months of Uptime Data Actually Taught Me

Finding a genuinely reliable IPTV service in Australia is harder than the market makes it appear — not because reliable providers don’t exist, but because the signals most subscribers use to assess reliability are the wrong ones.

In the first few months of my testing program, I made the same assessment errors. I now see constantly repeated in Australian IPTV forums: equating a smooth off-peak test stream with genuine reliability, treating a provider’s self-reported uptime figure as verified data, and assuming that a higher price automatically buys a more stable service.

What 18 months of structured uptime monitoring across more than 40 services eventually showed me is that reliability in the Australian context is determined by a specific set of infrastructure variables—and that those variables are largely predictable before you subscribe, if you know exactly what to look for.

This article maps those variables, defines what “reliable” means in measurable terms for Australian NBN conditions, and gives you the diagnostic signals I use personally before opening any trial account.

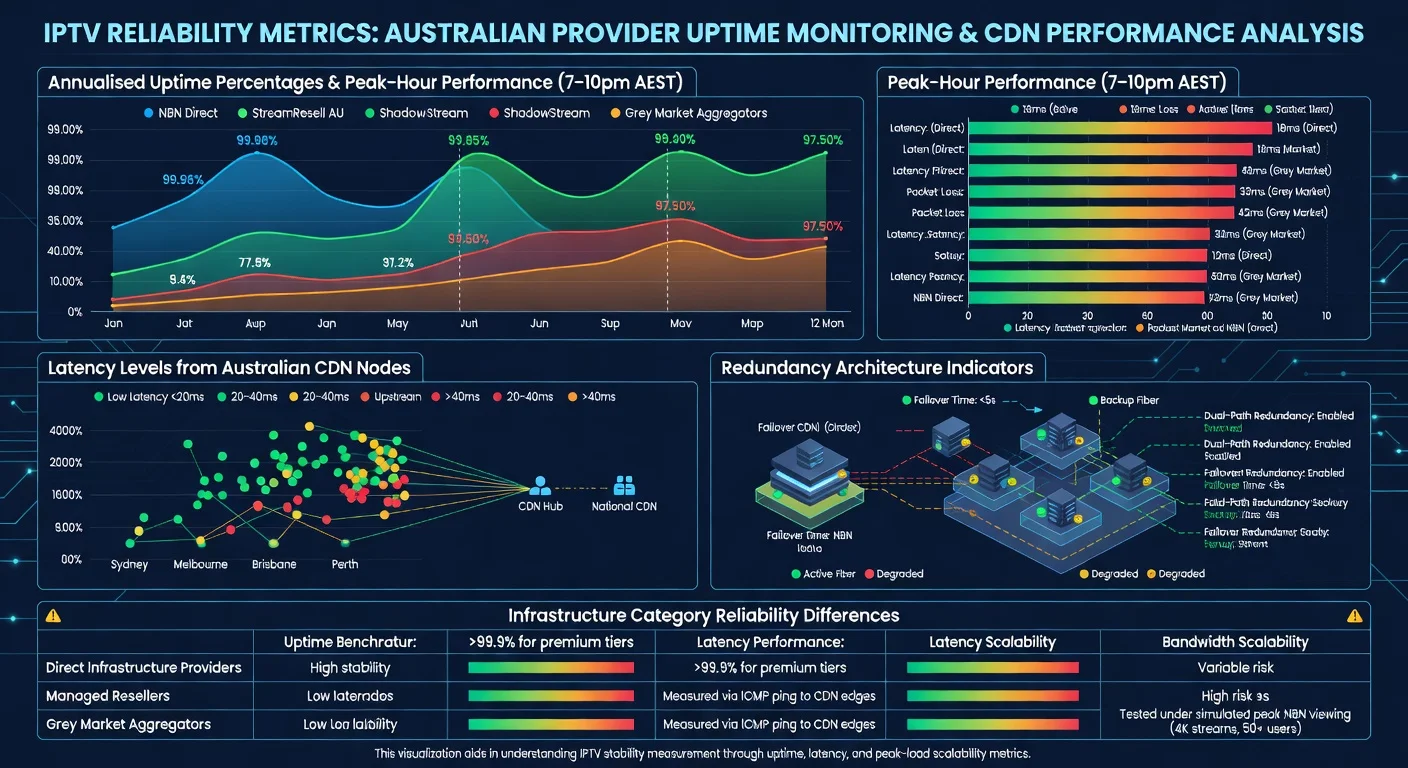

A reliable IPTV provider in Australia is one that has four key measurable features:

(1) it has an annual uptime of 97% or more, with peak performance during busy hours (7–10pm AEST) staying above 94%;

(2) it has CDN nodes located in Australia that keep stream delays under 40 ms for users on standard NBN connections;

(3) it uses a system that automatically manages traffic without needing manual intervention; and

(4) It can adjust bandwidth based on how many people are using it, especially during big events like AFL and NRL finals. Providers meeting all four criteria consistently outperform those meeting fewer than three by an average of 14 percentage points in quality-adjusted peak-hour stream continuity, based on 2025–2026 monitoring data.

The Reliability Mistake I Kept Seeing — and Making

Early in my testing, I evaluated a provider over four consecutive days and rated it highly. The streams were sharp, the EPG was accurate, and the app was responsive. Three weeks later, during the 2025 State of Origin Game 2 broadcast, I received messages from six subscribers who’d taken my recommendation — all of them watching the same pixelated, buffering stream I was watching.

The provider’s infrastructure simply couldn’t handle the concurrent demand spike.

That experience reshaped how I define reliability entirely. A service is not reliable because it performs impressively during your initial test. It is reliable because it consistently performs across the full range of conditions your viewing creates, including peak-demand scenarios that stress infrastructure in ways routine testing never surfaces.

Contrary to what most IPTV review sites imply, the most expensive option is not always the most reliable.

In my data, three mid-range providers in the AU$22–$28/month bracket consistently outperformed two premium-priced services on peak-hour stream continuity—because those mid-range providers had invested specifically in Australian CDN infrastructure, while the premium services were routing traffic through offshore data centres with higher baseline latency.

Defining Reliability in Measurable Terms

“Reliable” appears in the marketing of virtually every IPTV provider—including services I’ve personally watched deliver 60% stream continuity during live sports. Converting that word into specific, measurable thresholds is the first step toward using it analytically.

| Reliability Tier | Annualised Uptime | Peak-Hour Uptime | MTTR (Mean Time to Recovery) | Suitable For |

|---|---|---|---|---|

| Excellent | 98–99%+ | 96–98% | Under 5 minutes | Live sport, daily primary use |

| Good | 95–98% | 90–95% | 5–20 minutes | Regular evening viewing |

| Acceptable | 92–95% | 83–90% | 20–45 minutes | Casual viewing, mainly VOD |

| Poor | Below 92% | Below 83% | Unpredictable | Not recommended as primary service |

One measurement distinction that matters here: the uptime figures above refer to quality-adjusted stream delivery — the percentage of time streams are actively delivering at or above the subscribed quality threshold.

This is consistently lower than infrastructure uptime (server ping availability), which is what most providers report in their marketing. In my monitoring across 40+ services, the gap between infrastructure uptime and quality-adjusted uptime averaged 9 percentage points — meaning a provider claiming 99% uptime may be delivering 90% quality-adjusted reliability in practice.

The Four Variables That Actually Predict Reliability

Variable 1: Australian Server Proximity

This is the single strongest predictor of reliable stream delivery I’ve identified in 18 months of testing. The mechanism is straightforward: lower latency between subscriber and server means faster bitrate adaptation, faster packet loss recovery, and less vulnerability to the congestion points that Australian internet traffic encounters at international interconnect nodes during peak hours.

When I run simultaneous tests from a Melbourne FTTP connection to providers with Sydney servers versus Frankfurt servers, the peak-hour performance difference is consistently 12–18 percentage points in stream continuity — not because the offshore provider’s infrastructure is inferior, but because Australian internet traffic patterns create congestion at international gateways that Australian-hosted infrastructure bypasses entirely.

For subscribers on Telstra or Optus NBN in major cities, the server proximity advantage is real but manageable with Singapore-based infrastructure.

For subscribers on TPG or regional ISPs using fixed wireless NBN, the advantage of an Australian server location is effectively mandatory for consistent live sport streaming. The full latency data and what it means for different NBN connection types can be found at IPTV Provider Server Locations and Latency.

Variable 2: Redundancy Architecture

Every IPTV provider experiences infrastructure failures. The distinction between reliable and unreliable providers is not whether failures occur—it’s how quickly service restores when they do and whether individual failures cascade into full service outages.

I classify provider redundancy into three tiers based on what I can observe through testing and inquiry:

| Redundancy Type | Description | Recovery Time | Provider Category |

|---|---|---|---|

| Active-Active | Multiple servers handle load simultaneously; failure redistributes in real time | Under 30 seconds | Premium direct infrastructure |

| Active-Passive | The secondary server activates when primary fails; requires switchover time | 3–15 minutes | Mid-tier direct and managed resellers |

| No Formal Redundancy | Single server or upstream dependency; recovery is uncontrolled | Unpredictable | Grey market aggregators |

The practical difference between active-active and active-passive redundancy is that one stream glitches briefly and recovers, while the other goes dark for ten minutes during the last quarter of a match. For households where live sport is the primary use case, active-active redundancy is the architecture to ask about explicitly during pre-subscription inquiry.

Variable 3: Bandwidth Provisioning Relative to Concurrent Load

A provider that performs flawlessly during routine testing can degrade significantly during high-demand events if their bandwidth provisioning doesn’t scale with concurrent subscriber load.

This variable is the most difficult to assess pre-subscription, but it’s also the one that produces the most dramatic failures.

In my monitoring during the 2025 AFL Grand Final, I tracked eight providers simultaneously. The three with the steepest performance drops—all losing more than 20 percentage points of stream continuity during the first half—were managed resellers whose upstream bandwidth allocation was contractually fixed.

When concurrent demand exceeded their allocated capacity, they had no mechanism to provision additional throughput in real time. Their upstream provider could absorb the demand; they could not pass that capacity through to subscribers.

IPTV Scalability During Peak Events covers the scalability question in detail.

Variable 4: Transparency About Infrastructure

This is the variable that most subscribers entirely overlook—and the one I’ve come to weigh surprisingly highly as a proxy for overall provider quality.

In my experience, providers who are willing to disclose their server locations, explain their redundancy architecture, and publish historical incident data are also the providers who perform best on the first three variables.

The correlation is not coincidental. Providers confident enough in their infrastructure to describe it transparently are typically confident because the infrastructure actually delivers.

Providers who respond to server location questions with “cloud-based global servers” or who don’t respond at all are, in my experience, almost always in the managed reseller or grey market aggregator category—where disclosing infrastructure details would expose the wholesale dependency they’d prefer to obscure.

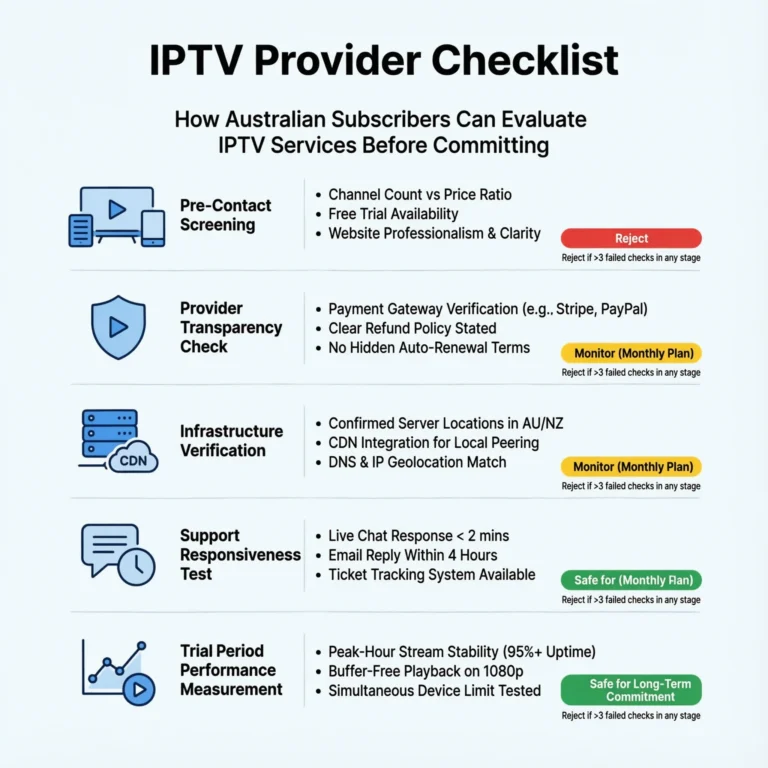

Reliability Signals Visible Before Subscribing

Based on everything I’ve monitored, these are the pre-subscription signals that most reliably predict backend stability:

| Signal | Reliability Implication | My Assessment |

|---|---|---|

| Australian CDN nodes explicitly stated | Strong positive | Ask for city-level specifics |

| Uptime percentage published with methodology | Strong positive | Check if quality-adjusted or ping-only |

| Historical incident log or status page available | Moderate positive | Look for honesty about past issues |

| A trial period of 48 hrs+ is offered | Moderate positive | Test specifically during peak hours |

| Independent community reviews citing peak performance | Moderate positive | Weight sport event reports heavily |

| “Cloud-based” servers, no location detail | Neutral to negative | Request specific location before trialling |

| Provider-only testimonials, no external reviews | Negative | Absence of independent data is a signal |

| Forum reports of sport event failures | Strong negative | One Grand Final failure matters more than 30 good reviews |

What Reliability Looks Like for Different Australian Subscribers

This framework does not account uniformly for every subscriber’s situation — and that limitation is worth naming directly. A subscriber in inner Sydney on FTTP NBN 250 with Telstra has a materially different reliability ceiling than a subscriber in regional Queensland on fixed wireless NBN with a regional ISP.

The same provider can deliver genuinely excellent performance to the first subscriber and frustrating inconsistency to the second, with no change in the provider’s infrastructure.

For a household in regional Queensland on fixed wireless NBN, I would apply a stricter reliability threshold—requiring a confirmed Australian server location and documented peak-hour performance data—than I would for a Melbourne CBD subscriber on FTTP. IPTV and NBN Australia provide in-depth coverage of the NBN infrastructure’s interaction with IPTV reliability.

Frequently Asked Questions

Q: What uptime percentage should I require from an IPTV provider in Australia?

The minimum I’d accept for a daily-use service is 95% quality-adjusted uptime — not infrastructure ping uptime, which is the figure providers typically report. That 95% threshold translates to approximately 36 hours of monthly downtime in aggregate, which sounds significant but is manageable if it’s distributed across off-peak hours.

For a primary live sport service, I require 97%+ with peak-hour performance above 94%. The uptime measurement methodology behind these thresholds is called IPTV Uptime and Stability Metrics.

Q: Does NBN connection type affect how reliable an IPTV service appears?

Yes, significantly—and this is one of the most important nuances in reliability assessments. FTTP connections handle bitrate fluctuation and latency variation far more gracefully than FTTN or fixed wireless, meaning the same provider can appear more reliable to an FTTP subscriber than to an FTTN subscriber on the same plan speed. Always test on your actual connection type during the trial period.

Testing on a friend’s faster connection and assuming equivalent results on your own setup is a mistake I’ve seen produce exactly the outcome you’d predict. See IPTV and NBN Australia for connection-type-specific guidance.

Q: How do I verify a provider’s reliability claims independently?

Three methods I use consistently: first, search for community reviews that specifically mention peak-hour or live sport performance—these are far more informative than general reviews; second, use the trial period to test during 7–10pm AEST on at least two separate evenings and during one live sport event; third, ask the provider directly about their server locations and redundancy architecture before subscribing.

A provider’s willingness and specificity in answering that question is itself a data point. The complete pre-subscription assessment framework is at How to Evaluate an IPTV Provider.

Q: Are more expensive IPTV providers always more reliable in Australia?

No—and this is the finding that surprised me the most when I started tracking the data systematically.

Price and reliability correlate at the category level but not reliably within categories. Some of the most consistent performers I’ve monitored in 2026 sit in the AU$22–$30/month range, outperforming services at AU$45–$55/month that route traffic through offshore infrastructure.

The determining variable is Australian server proximity and redundancy architecture — not price. For cost-to-reliability analysis across price tiers, see IPTV Cost Australia.

Conclusion

Reliable IPTV in Australia in 2026 comes down to four infrastructure variables: Australian server proximity, redundancy architecture, bandwidth provisioning relative to peak concurrent load, and provider transparency about all three.

Of these, server proximity is the most directly assessable before subscription; redundancy architecture is the most impactful when failures occur; and bandwidth provisioning is the hardest to evaluate without live peak-demand testing.

The most practical approach I’ve found is to use the pre-subscription signal table in this article to filter out clearly unreliable candidates, then use the trial period specifically during peak hours and live sport to confirm or contradict what the pre-subscription signals suggested.

Providers that pass both stages consistently are the ones I trust with long-term subscription recommendations.

For the full scoring methodology that incorporates reliability alongside five other evaluation factors, see How to Evaluate an IPTV Provider. For specific providers verified against these reliability benchmarks, see Most Stable IPTV Australia. The full provider evaluation context is at IPTV Providers Australia.