Introduction

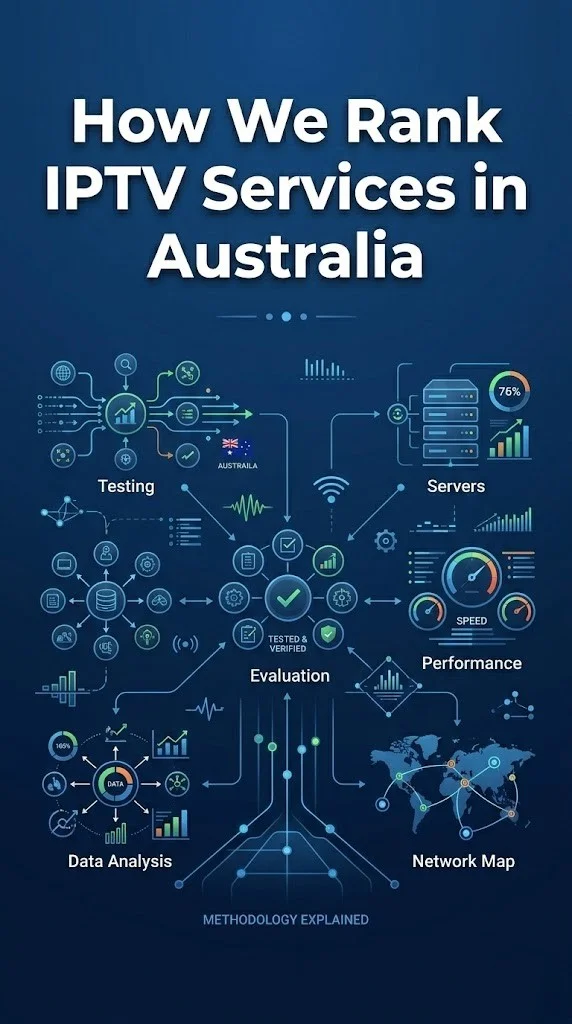

We rank IPTV services for Australian viewers using five weighted criteria, each tested during prime-time viewing on Australian NBN connections: channel reliability during peak hours (30%), EPG quality, including accuracy for the AEST timezone (20%), sports stability during live matches (20%), catch-up functionality (15%), and server infrastructure proximity, meaning how close the CDN servers are to Australia (15%). Every assessment is conducted between 7 and 10 PM AEST, because off-peak performance does not predict the viewing experience that matters most: your evening television.
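As a rough illustration of how the five weights could combine into a single figure, here is a minimal sketch. The weights are the ones stated above; the 0-100 per-criterion scale, the `WEIGHTS` mapping, and the `composite_score` function are assumptions made for this example, since the methodology specifies weights but not a numeric scale.

```python
# Weights are taken from the methodology; the 0-100 scale is an assumption.
WEIGHTS = {
    "channel_reliability": 0.30,
    "epg_quality": 0.20,
    "sports_stability": 0.20,
    "catch_up": 0.15,
    "server_infrastructure": 0.15,
}


def composite_score(scores: dict[str, float]) -> float:
    """Return the weighted average of per-criterion scores (assumed 0-100)."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical scores for one service, purely for illustration.
example = {
    "channel_reliability": 96.0,
    "epg_quality": 80.0,
    "sports_stability": 90.0,
    "catch_up": 70.0,
    "server_infrastructure": 85.0,
}
print(round(composite_score(example), 2))  # 86.05
```

Because channel reliability carries the largest weight, a weak reliability result pulls the composite down more than an equally weak result on catch-up or server infrastructure.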

Transparency in evaluation methodology matters because the IPTV market lacks the independent review infrastructure that other consumer products enjoy. Understanding the assessment process enables you to critically evaluate our recommendations and use the same framework to test any service independently.

For our service evaluations based on this methodology, see our Best IPTV Australia guide.

What Are Our Five Evaluation Criteria?

1. Channel Reliability (30% Weight)

Channel reliability measures what percentage of listed channels actually work during peak viewing hours. We test 50 channels across all major categories (sports, entertainment, news, kids, and international) at 8:00 PM AEST and record working/non-working status.

Scoring: 95%+ working = Excellent. 90-94% = Good. 85-89% = Adequate. Under 85% = Poor.
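The bands above can be expressed as a small lookup. This is a sketch rather than our internal tooling; the function name `reliability_rating` and the default of 50 tested channels are illustrative choices, and any fractional percentage between two bands is assigned to the lower band.

```python
def reliability_rating(working: int, tested: int = 50) -> str:
    """Map a working-channel count to the rating bands listed above."""
    if tested <= 0 or not 0 <= working <= tested:
        raise ValueError("working must be between 0 and tested")
    percent = 100 * working / tested
    if percent >= 95:
        return "Excellent"
    if percent >= 90:
        return "Good"
    if percent >= 85:
        return "Adequate"
    return "Poor"


print(reliability_rating(48))  # 48 of 50 working is 96%
print(reliability_rating(42))  # 42 of 50 working is 84%
```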

2. EPG Quality (20% Weight)

EPG quality assesses three things: timezone accuracy for Australian Eastern Standard Time (AEST) and Australian Eastern Daylight Time (AEDT), data coverage (the percentage of channels with programme information), and schedule depth (how far ahead the guide extends).

Scoring: Correct AEST + 90% coverage + 48-hour depth = Excellent. Partial coverage or timezone issues result in a lower score.
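A common timezone fault is a guide that ignores daylight saving and shows every programme one hour out. The sketch below shows one way to measure that shift and to check the Excellent threshold. It assumes fixed offsets (AEST is UTC+10, AEDT is UTC+11); the function names and the one-minute tolerance are hypothetical choices for this example.

```python
from datetime import datetime, timedelta, timezone

AEST = timezone(timedelta(hours=10), "AEST")  # standard time, UTC+10
AEDT = timezone(timedelta(hours=11), "AEDT")  # daylight time, UTC+11


def epg_offset_hours(guide_start: datetime, actual_start: datetime) -> float:
    """Hours by which a guide entry is shifted from the real broadcast start."""
    return (guide_start - actual_start).total_seconds() / 3600


def epg_is_excellent(offset_hours: float, channels_with_data: int,
                     channels_total: int, depth_hours: float) -> bool:
    """Check the Excellent band: correct timezone, 90% coverage, 48-hour depth."""
    coverage = 100 * channels_with_data / channels_total
    timezone_ok = abs(offset_hours) < 1 / 60  # assumed one-minute tolerance
    return timezone_ok and coverage >= 90 and depth_hours >= 48


# A 7:30 PM AEDT broadcast that the guide lists as 7:30 PM AEST.
actual = datetime(2024, 1, 15, 19, 30, tzinfo=AEDT)
guide = datetime(2024, 1, 15, 19, 30, tzinfo=AEST)
print(epg_offset_hours(guide, actual))  # 1.0, the guide runs one hour late
```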

3. Sports Stability (20% Weight)

Sports stability measures stream consistency during a complete live match—counting buffer events, quality drops, and audio sync issues during the full duration.

Scoring: Zero buffers throughout = Excellent. 1-2 buffers = Good. 3-5 buffers = Adequate. 6+ buffers = Poor.
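If you keep a simple event log while watching a match, the buffer bands above reduce to a short function. The log format and event labels below are hypothetical; only the buffer count feeds the rating, as in the thresholds listed, while quality drops and audio sync issues are recorded alongside it.

```python
from collections import Counter


def sports_rating(buffer_events: int) -> str:
    """Map a full-match buffer count to the rating bands listed above."""
    if buffer_events < 0:
        raise ValueError("buffer_events cannot be negative")
    if buffer_events == 0:
        return "Excellent"
    if buffer_events <= 2:
        return "Good"
    if buffer_events <= 5:
        return "Adequate"
    return "Poor"


# Hypothetical event log for one match; the labels are illustrative only.
match_log = ["buffer", "quality_drop", "buffer", "audio_sync"]
counts = Counter(match_log)
print(counts["buffer"], sports_rating(counts["buffer"]))  # 2 Good
```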

4. Catch-Up Functionality (15% Weight)

Catch-up testing verifies that replay works across major channels, assesses the time window available (24/48/72 hours), and confirms playback quality matches live stream quality.

Scoring: 48+ hours, 80%+ channels, smooth playback = Excellent. 24 hours, partial channels = Adequate. Broken or absent = Poor.
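The catch-up bands leave some combinations undefined, for example a 72-hour window on only half the channels. The sketch below makes one possible choice explicit: anything that works but misses the Excellent band is treated as Adequate. That fallback, the function name, and the parameter names are assumptions for illustration.

```python
def catch_up_rating(window_hours: float, channel_coverage_pct: float,
                    smooth_playback: bool) -> str:
    """Rate catch-up using the bands above; in-between cases default to Adequate."""
    # Broken or absent catch-up: no replay window or no channels offering it.
    if window_hours <= 0 or channel_coverage_pct <= 0:
        return "Poor"
    if window_hours >= 48 and channel_coverage_pct >= 80 and smooth_playback:
        return "Excellent"
    # Assumption: every working but sub-Excellent combination lands here.
    return "Adequate"


print(catch_up_rating(72, 85, True))   # Excellent
print(catch_up_rating(24, 50, True))   # Adequate
print(catch_up_rating(0, 0, False))    # Poor
```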

5. Server Infrastructure (15% Weight)

Server infrastructure is assessed through channel switching speed (the time from selecting a channel to the stream starting, which serves as a proxy for CDN proximity), latency measurements (the time data takes to travel from source to destination), and peak-hour consistency patterns.

Scoring: Switch speed under 3 seconds + consistent quality = Excellent. 3-5 seconds + mostly consistent quality = Adequate. Over 5 seconds + variable quality = Poor.
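One way to turn a handful of stopwatch timings into a rating is to take the median switch time and pair it with a tester's yes/no judgement on consistency. This is a sketch under stated assumptions: the use of the median, the `consistent` flag, and the function name are illustrative, and the middle band (3-5 seconds) is labelled Adequate here to match the other criteria.

```python
from statistics import median


def infrastructure_rating(switch_times_s: list[float], consistent: bool) -> str:
    """Rate infrastructure from channel switch timings (seconds)."""
    if not switch_times_s:
        raise ValueError("at least one switch timing is required")
    typical = median(switch_times_s)
    if typical > 5:
        return "Poor"
    if typical < 3 and consistent:
        return "Excellent"
    # Assumption: fast but inconsistent services fall into the middle band.
    return "Adequate"


print(infrastructure_rating([1.8, 2.2, 2.5, 2.1, 2.9], consistent=True))
print(infrastructure_rating([5.5, 6.2, 7.0], consistent=False))
```

The median is used instead of the mean so that a single slow switch does not dominate a short series of measurements.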

How Do We Conduct Testing?

All testing follows a standard procedure conducted in Melbourne on Telstra NBN connections. Each service is tested over a minimum 7-day period, with structured evaluation sessions during peak hours (7-10 PM AEST) on at least 5 of those 7 evenings. Testing devices include a Fire TV Stick 4K (primary) and an Android TV box (secondary) connected via Ethernet.

Testing Protocol

OUR 7-DAY TESTING PROTOCOL

══════════════════════════════════════

SETUP: Day 0

→ Install on Fire TV Stick 4K

→ Connect via Ethernet

→ Configure Xtream Codes credentials

→ Verify EPG loading

DAYS 1-2: Baseline Assessment

→ Channel reliability survey (50 channels)

→ EPG timezone and coverage check

→ Channel switching speed measurement

→ Interface and navigation assessment

DAYS 3-5: Peak-Hour Evaluation

→ 8:00-9:30 PM viewing sessions

→ Buffer event logging

→ Quality drop recording

→ Consistency comparison across nights

DAYS 6-7: Specialist Testing

→ Live sports match evaluation

→ Catch-up replay testing

→ Multi-device testing (if relevant)

→ Final scoring and assessment

══════════════════════════════════════
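For the "consistency comparison across nights" step on Days 3-5, a simple summary of nightly buffer counts is usually enough. The sketch below is one possible way to do it; the function name, the dictionary layout, and the sample figures are hypothetical.

```python
from statistics import mean, pstdev


def nightly_consistency(buffers_per_night: dict[str, int]) -> dict[str, float]:
    """Summarise buffer counts across peak-hour sessions."""
    counts = list(buffers_per_night.values())
    if not counts:
        raise ValueError("at least one night of data is required")
    return {
        "mean": mean(counts),      # average buffers per session
        "spread": pstdev(counts),  # low spread means consistent nights
        "worst": max(counts),      # the single worst session
    }


# Hypothetical buffer counts logged during three 8:00-9:30 PM sessions.
sessions = {"day3": 1, "day4": 0, "day5": 5}
summary = nightly_consistency(sessions)
print(summary["mean"], summary["worst"], round(summary["spread"], 2))
```

A service with a low average but a high worst-night figure is worth a second look, because one bad evening can coincide with the match you most wanted to watch.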

Why Do We Test During Peak Hours Only?

We test exclusively during peak hours (7-10 PM AEST) because this is when 80%+ of Australian IPTV viewing occurs and when both provider infrastructure and NBN connections face maximum stress. Every IPTV service performs well at 10 AM. The meaningful quality separation happens at 8:30 PM when server load peaks, NBN congestion compounds, and the infrastructure investment—or lack thereof—becomes observable.

Peak-hour testing is the single methodological choice that most improves evaluation accuracy. A provider receiving an “Excellent” rating in our methodology has demonstrated its infrastructure handles real-world Australian viewing conditions, not laboratory-ideal scenarios.

What We Do Not Factor Into Rankings

We explicitly exclude three commonly cited factors from our rankings:

Channel count: Raw channel numbers have near-zero correlation with viewing satisfaction. A service with 3,000 reliable channels provides a better experience than one with 20,000 mostly non-functional streams.

VOD library size: Video on demand (VOD) lets viewers watch content whenever they choose, but we treat it as a bonus feature. IPTV’s value is live TV, and dedicated streaming platforms serve on-demand viewing better than IPTV VOD.

Marketing claims: Provider self-descriptions, social media presence, and advertising play no part in our evaluations. Only observable, measurable performance during structured testing influences our assessments.

How Can You Apply This Methodology Yourself?

Our evaluation framework is designed to be replicable. During any IPTV trial, you can apply the same five criteria with the same scoring thresholds. Test 50 channels at 8 PM for reliability. Check EPG timezone accuracy. Watch a complete live match for sports stability. Replay yesterday’s programme to test catch-up. Time a series of channel switches to assess the infrastructure. The results will give you a reliable picture of the quality of any IPTV service on your specific connection.

For the evaluation protocol you can follow during a trial, see our provider assessment framework.

Frequently Asked Questions

How often do you update IPTV rankings?

We re-test services quarterly to account for infrastructure changes, new provider entries, and shifts in service quality. IPTV provider quality can change significantly over months as infrastructure is upgraded or degraded, subscriber bases grow, and content sources change. Our methodology remains consistent across updates to ensure comparable results. See our Best IPTV Australia guide for current assessments.

Why isn’t channel count factored in?

Channel count does not predict viewing satisfaction. In our analysis, the correlation between listed channel count and channel reliability was negative—providers with the highest counts often had the lowest percentage of functional channels. A provider maintaining 3,000 channels at 95%+ uptime delivers a dramatically better experience than one listing 20,000 channels at 70% uptime.

Can I trust IPTV reviews online?

You should approach online IPTV reviews cautiously. Many are affiliate-driven: the reviewer earns a commission for each referral, so the review is not an independent evaluation. Our methodology favours measurable, reproducible tests over subjective impressions. The most reliable way to evaluate an IPTV service is to test it yourself using the framework described in this guide.

Why is sports weighted equally to EPG in your methodology?

Sports stability receives 20% weight (equal to EPG quality) because sports is the primary subscription driver for Australian IPTV viewers and the content type that most severely tests provider infrastructure. A service that maintains HD stability during a live AFL match has demonstrated infrastructure quality that benefits all viewing—not just sports.

Conclusion

Our IPTV ranking methodology prioritises what Australian viewers experience during their actual viewing hours—channel reliability, EPG quality, sports stability, catch-up functionality, and server proximity, all measured during prime-time peak conditions. This framework is designed to be transparent, replicable, and focused on measurable quality indicators rather than marketing metrics. We encourage every viewer to apply these same criteria during their own trial evaluations, using our published methodology as a guide to making informed subscription decisions.